This post compares whether 10-year-old server hardware can keep up with today’s desktop computers. The nested virtualized lab worked better on the desktop.

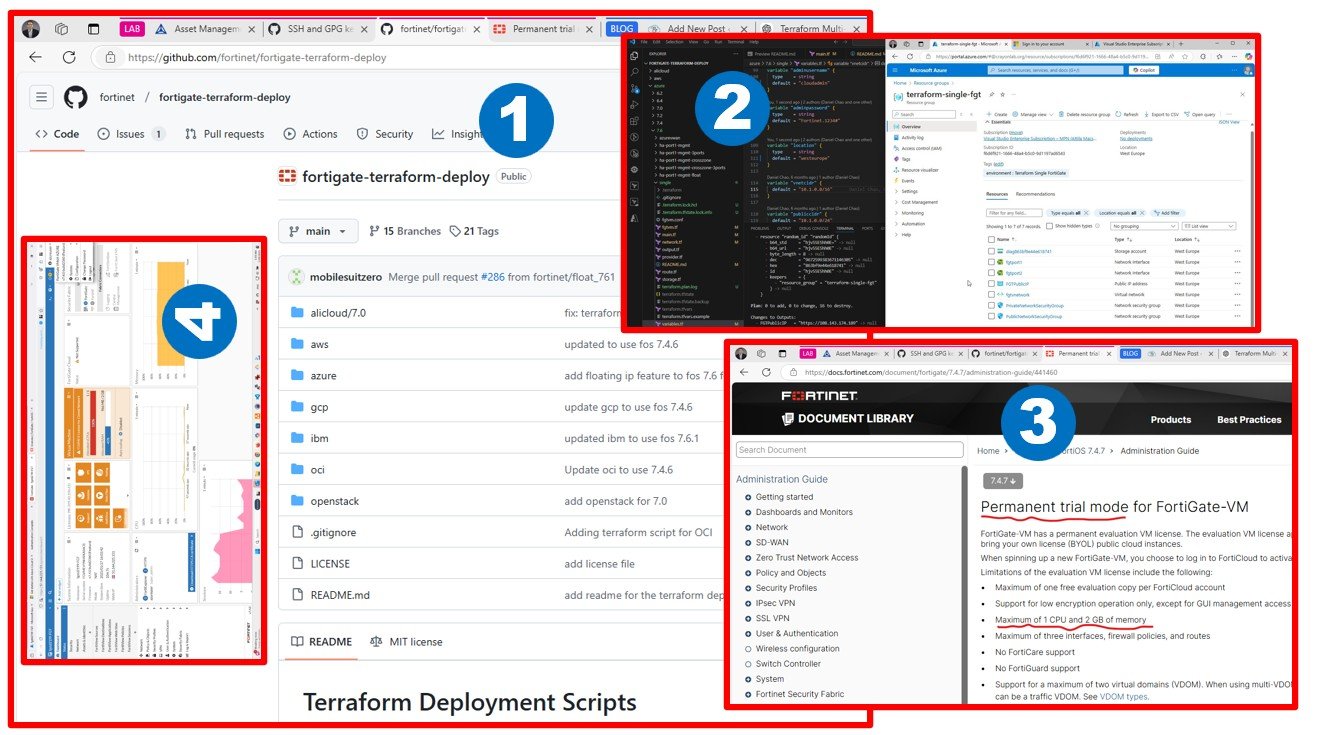

As I mentioned in my previous blog posts, I am keen to find the best solution to design and build a sophisticated VMware LAB (as a source system) to test Cloud Migration tools.

My plan is to have a VMware-based LAB up and running at low cost, as a source system for Cloud Migration tests, by October 2021 at the latest. My blog might save you time if you are experimenting with or learning similar things.

Our journey starts with building an on-premises LAB, which requires hardware. The LAB emulates production source datacenters (even multi-tenant ones) for the demo migration projects.

You should try something similar, unless you belong to the born-in-the-cloud generation* and have an unlimited budget, or friends who give you access to such environments for learning (self-education) purposes. At some point later, you might need to perform cross-cloud migration tests, which will not require on-prem hardware. As of today, though, the source of a migration is in most cases a local datacenter (which includes hardware).

* Please note that a minimal 3-node Azure VMware Solution deployment will cost you ~24K USD/month. This is for production or serious POCs, not for self-education. You can try the VMware-hosted shared hands-on labs as well; I will do the same soon.

I like to build LABs myself, from scratch, to get the full admin experience. I like to be in control of “My Cloud”, or “Your Cloud” as we used to say at VMware a decade ago.

I compared a 10-year-old server running vSphere 6.7 natively with a one-year-old workstation running vSphere 7 in a nested virtualized setup. As of today, the workstation looks like the better approach for learning/LAB purposes. Nested virtualization has improved a lot over the last few years.
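For a nested ESXi VM to run its own VMs, the outer VM has to expose hardware virtualization to the guest. Here is a minimal sketch that checks a VM's `.vmx` text for this, assuming `vhv.enable = "TRUE"` is the key behind the "Virtualize Intel VT-x/EPT or AMD-V/RVI" checkbox in VMware Workstation (please verify this on your own version). The VM name and sizes in the sample are hypothetical.

```python
# Sketch: check whether a Workstation VM's .vmx text exposes hardware
# virtualization to the guest (assumed key: vhv.enable).

def parse_vmx(text: str) -> dict:
    """Parse 'key = "value"' lines of a .vmx file into a dict (keys lowercased)."""
    settings = {}
    for line in text.splitlines():
        line = line.strip()
        # Skip blank lines, comment/shebang lines, and anything without '='.
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        settings[key.strip().lower()] = value.strip().strip('"')
    return settings


def nested_virtualization_enabled(text: str) -> bool:
    """True if the assumed nested-virtualization flag is set to TRUE."""
    return parse_vmx(text).get("vhv.enable", "FALSE").upper() == "TRUE"


# Hypothetical sample content, not taken from a real lab VM.
SAMPLE_VMX = '''
displayName = "esxi7-nested-01"
numvcpus = "4"
memsize = "16384"
vhv.enable = "TRUE"
'''

if __name__ == "__main__":
    print(nested_virtualization_enabled(SAMPLE_VMX))
```

To check a real VM, read the `.vmx` file from the VM's folder and pass its content to `nested_virtualization_enabled`.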

Please do not buy any hardware before I finish building my lab and confirm that this concept works as expected.

Below are my first impressions after one week of testing.

| Attila is comparing | Server / X5660 / LSI RAID 12G@7K / native vSphere 6.7 boot | Workstation / 3900XT / NVMe PCIe / nested vSphere 7 on WS16 & Win10 |
|---|---|---|
| Performance | 5 – OK; with locally attached 12 Gbps enterprise NL-SAS RAID10 | 7 – surprisingly good speed on NVMe Gen4; OS install, boot and work experience are all quick, no wasted time |
| Noise level | 1 – high, not recommended to work next to the server; the big issue at home | 7 – low, not as quiet as a laptop, but absolutely OK near you |
| Power consumption | 5 – 400-500 W depending on load, quite expensive to run 24/7 (2×6 cores, 18×8 GB DDR3) | 5 – similar (12 cores, 4×32 GB DDR4) |
| Startup time | 3 – boots like a server, i.e. slowly | 10 – startup to full operation in under ~30 sec |
| Hibernate option | 1 – nope | 10 – this is huge: suspend the VM or just hibernate Windows 10 with all VMs running, then continue anytime later |
| Cost of one node | 5 – ~3200 USD (was ~10K) | 5 – ~3200 USD (it’s ~one year old) |
| Can run DaVinci Resolve 17 and Forza Horizon 4 | no, it’s dedicated to vSphere | yes, you can edit video or play games if your memory is not yet full of VMs |
| Summary | Total points: ~20 | Total points: ~40 |

Ratings are between 1 and 10; higher is better. The workstation looks twice as good as the server. I know, I am comparing apples with giraffes.
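The totals and the power figures can be tallied with a few lines of Python. This is only a sketch: the scores are copied from the rated rows of the table, while the 30-day month and the helper names are my own assumptions for illustration.

```python
# Scores copied from the rated rows of the table: (server, workstation).
SCORES = {
    "Performance": (5, 7),
    "Noise level": (1, 7),
    "Power consumption": (5, 5),
    "Startup time": (3, 10),
    "Hibernate option": (1, 10),
    "Cost of one node": (5, 5),
}


def totals(scores):
    """Return (server_total, workstation_total) over all rated rows."""
    server = sum(s for s, _ in scores.values())
    workstation = sum(w for _, w in scores.values())
    return server, workstation


def monthly_kwh(watts, hours_per_day=24, days=30):
    """Energy used per month in kWh at a constant draw (30-day month assumed)."""
    return watts * hours_per_day * days / 1000


if __name__ == "__main__":
    server, workstation = totals(SCORES)
    print(f"Server: {server}, workstation: {workstation}, "
          f"ratio: {workstation / server:.1f}")
    for watts in (400, 500):
        print(f"{watts} W running 24/7 is {monthly_kwh(watts):.0f} kWh per month")
```

Multiply the kWh figure by your local electricity price to see what running a node 24/7 costs where you live.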

This blog is not sponsored by anybody; it is based on devices I have owned for some time. You might have similar gadgets at home. Do not let them go to waste. Use them for self-education.

I installed dual-port 10G Intel X710 NICs so that I can maybe build a 2-node vSAN with an external quorum later.

You will need a creator PC at some point to run Windows 10 (oops, sorry, Windows 11 is the one for creators!). You might end up with a similar experience using VMware Workstation 16. I also use my creator PC for other purposes, such as editing video in DaVinci Resolve, which leverages the GPUs.

We will talk about the basic networking environment (AD/DNS/DHCP/GW) for such a LAB in the next blog post. Please be patient; I know that localhost.localdomain looks as bad as self-signed certificates.